Over a year ago, I wrote a blog about Multiple tier architecture and continuous delivery with Power BI, mainly an overview of the setup and of manually creating development and production content. This blog continues that story and goes into more detail on Power BI CI/CD with automated deployments in Azure DevOps, a solution that Dave Ruijter, Ton Swart and I worked on over the last year. This is the second blog co-authored with Ton Swart, so read along!

Please also check the updated blog with the most recent version of the DevOps Extension.

Multi-tier setup

First, a short recap of what I’ve written before on this topic. The multi-tier setup, also known as DTAP (development, test, acceptance and production), is a much-used setup in IT. We follow the same setup when developing Power BI content in a managed scenario.

In software development, we work with a continuous integration process, which helps us to automatically merge artifacts and lets us all work with one code base, as well as continuous delivery to automatically deploy our solutions. This type of release management process is supported by a Microsoft service, Azure DevOps.

Azure DevOps (formerly known as TFS or VSTS) provides version control, automated builds, release management and lots more! To work with Azure DevOps, we need to save our pbix files in a central place where the DevOps service can access them for deployment. In the next chapter we go into more detail on central storage and versioning of your Power BI files in relation to Azure DevOps.

The setup below is what we will elaborate on in the next chapters. This includes all parts, like versioning in Git and continuous delivery, and how you can set this up yourself!

Version history

Versioning of Power BI files can be an issue. Where do you save your pbix files, and how do you make sure they are included in a backup or, better still, saved regularly as intermediate versions? This can be done more efficiently. Depending on the size of your project, you can go for a simple, low-effort solution with OneDrive or professionalize it with Git!

OneDrive / SharePoint sync

One of our best practices listed on the Power BI Cheat Sheet is to save your pbix files on SharePoint or OneDrive, simply because this gives you at least a backup. In your day-to-day work with Power BI files this can be cumbersome, because you don’t want to manually upload and download the pbix files every time you have a new version. Luckily, the SharePoint Sync option synchronizes the files between your local system and SharePoint or OneDrive. Read all about this option in the Microsoft documentation.

Still using a classic Power BI workspace? Please know that it is actually an Office 365 group, including additional resources like SharePoint. You can use this SharePoint location to save and sync your pbix files! Be aware of the differences between the classic and new workspaces! In my opinion you should always go for the new experience workspaces from now on because of all the additional functionality. Adam Saxton (Guy in a Cube) explains the differences between the workspaces in this video.

The approach of saving your pbix files on SharePoint or OneDrive works fine for small projects. But working in an enterprise organization, or with Azure DevOps, requires a more professional solution, which we can achieve by using Git repositories for versioning.

Git repositories

As developers, we might be used to working with Git repositories, especially to have release management in place. Git is well known as a modern version control system. With Git, you have a local copy of the code on your machine, on which you continue developing. When you’re finished with your work, you push your local commits so they are merged into the online (shared) repository. Only the changes are pushed and saved in the online repository, so a new version is created only for the code that changed.

Versioning of Power BI files is a whole different story. Since pbix files are binary files, there is no way of checking in only the code changes. When you push changes, Git treats the pbix file as a single object that has a new version.

Because your pbix file also includes the imported dataset, the files can become very large (also check my other blog about improving report building)! Git is not very good at handling big files by default, but luckily there is a Git extension called Large File Storage (LFS). This extension stores large files outside the main Git repository, in a separate LFS store. To keep things performing well, the main repository only includes pointers to the actual content. For every file you save, Git LFS creates a pointer and saves the binary file (the pbix file in our case) as a blob (binary large object).
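To make the pointer idea concrete, here is a minimal sketch in Python (not part of our tooling) of what ends up in the main repository for a tracked file. A Git LFS pointer is a small text file with a spec version, the SHA-256 hash of the content and its size in bytes. The function name `lfs_pointer` and the stand-in bytes are only for illustration.

```python
import hashlib


def lfs_pointer(content: bytes) -> str:
    """Return the text of a Git LFS pointer file for the given file content."""
    # The object id is the SHA-256 hash of the real (binary) content.
    oid = hashlib.sha256(content).hexdigest()
    return (
        "version https://git-lfs.github.com/spec/v1\n"
        f"oid sha256:{oid}\n"
        f"size {len(content)}\n"
    )


if __name__ == "__main__":
    # Stand-in bytes; in practice this would be the content of a pbix file.
    print(lfs_pointer(b"example pbix bytes"), end="")
```

In a real repository you never write these pointers yourself. After running `git lfs track "*.pbix"`, Git LFS creates them when you commit, so the pointer stays a few lines long no matter how large the pbix file gets.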

So much for the continuous integration setup. But how does this all relate to continuous delivery of Power BI content? Azure DevOps is a Microsoft cloud service for collaborating in your team and shipping deliverables faster through automation. Since it runs in the cloud, the artifacts need to be stored somewhere central, which is the Git repository.

Continuous Delivery with Azure DevOps

APIs seem to be everywhere these days. Every popular online service has one, and so does the Power BI service. APIs allow developers to interact with online services without having to point and click their way through a UI. Many services offer REST APIs in particular, an industry-standard way of exposing information to the world.

When using PowerShell to work with REST APIs, you’ll eventually come across two commands: Invoke-WebRequest and Invoke-RestMethod. Both send and receive HTTP data to and from HTTP endpoints and can be used to interact with REST APIs. In a nutshell, Invoke-RestMethod does what Invoke-WebRequest does, but parses JSON responses into objects for you, which is why we use Invoke-RestMethod. It also includes other useful features for working with REST APIs, such as authentication. While the built-in PowerShell cmdlet Invoke-RestMethod is a great way to explore an API, it expects you to know how to deal with headers, body payloads, authentication and so on.

Because we want to focus on the flow of the script (i.e. creating workspaces, importing reports, changing parameters and data sources) instead of bothering with headers, body payloads, authentication and so on, we created a PowerShell module that wraps the Power BI REST API calls in cmdlets we can use in our scripts. So instead of calling Invoke-RestMethod directly, we can create a workspace with a cmdlet, for example New-PowerBIGroup -Name "DP_DEMO_dev".
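To give an idea of the plumbing such a wrapper hides, here is a minimal sketch of the raw request behind creating a workspace. It is written in Python purely for illustration and is not the code of our PowerShell module. It only prepares the request for the Power BI REST API “Create Group” call and does not send it. The function name and the token placeholder are assumptions, and getting the access token from Azure AD is left out.

```python
import json
import urllib.request

API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def build_create_workspace_request(name: str, access_token: str) -> urllib.request.Request:
    """Prepare (but do not send) the HTTP request that creates a workspace."""
    # Body payload: the REST API expects the workspace name as JSON.
    body = json.dumps({"name": name}).encode("utf-8")
    return urllib.request.Request(
        # workspaceV2=True asks for a new experience workspace.
        url=f"{API_ROOT}/groups?workspaceV2=True",
        data=body,
        method="POST",
        headers={
            # Authentication: a bearer token obtained from Azure AD (not shown here).
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
    )


if __name__ == "__main__":
    request = build_create_workspace_request("DP_DEMO_dev", "<access-token>")
    print(request.get_method(), request.full_url)
    print(request.data.decode("utf-8"))
```

Every call needs the same three ingredients: a URL, headers carrying the token and a JSON body. That repetition is what makes wrapping the calls in cmdlets worthwhile.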

To take it up a notch: how cool would it be if we could use our PowerShell module to create custom tasks for our Azure DevOps build and release pipelines? Very cool! So we did! We created custom tasks for creating Power BI workspaces, importing Power BI reports and changing a connection or parameter.

Setup in DevOps

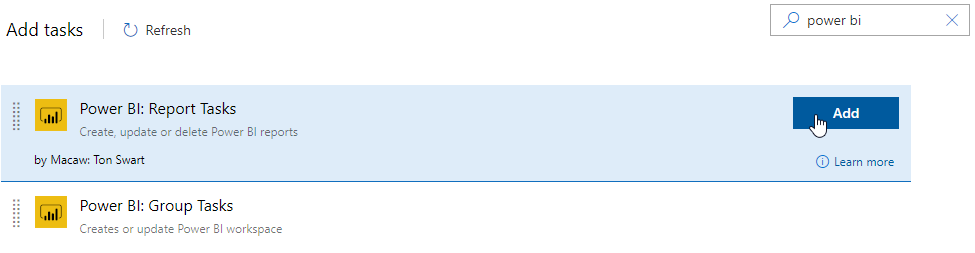

First install the “Macaw Power BI Extensions” from the Azure DevOps Marketplace in your Azure DevOps organization.

Register an application with Azure AD to give your application access to the Power BI REST APIs. Follow the steps in the Microsoft documentation here, using the Native application type.

Git repository

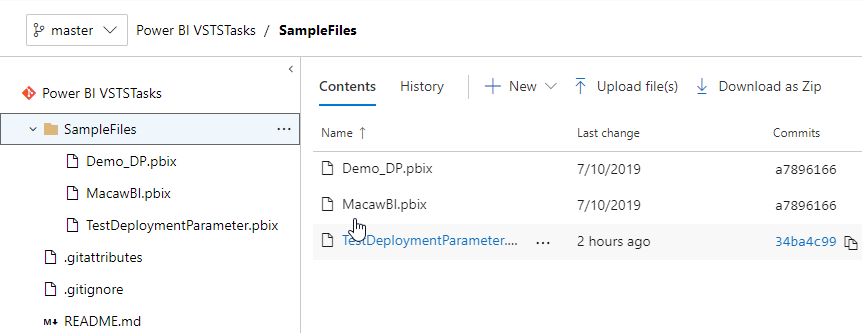

For the deployment of the Power BI files, place some *.pbix files in the SampleFiles folder of your Git repository.

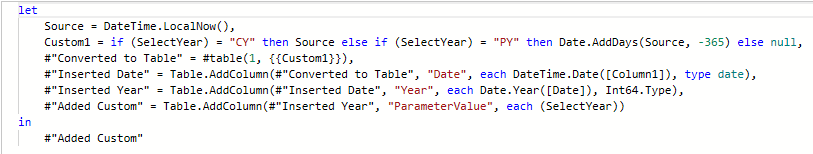

Let’s take an example. We uploaded the Power BI file “TestDeploymentParameter.pbix” which is a Power BI report with a simple date query.

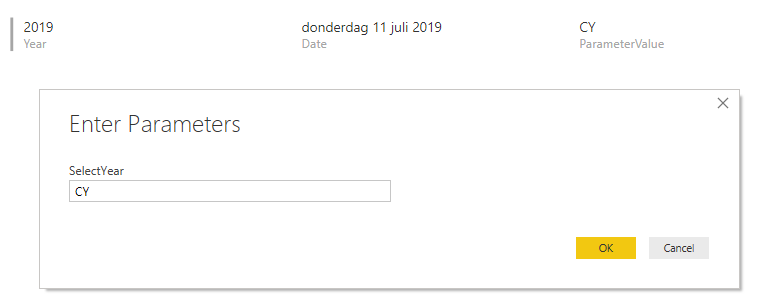

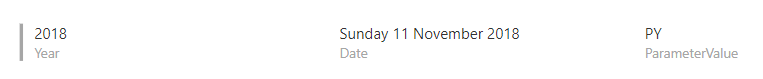

We simply control the date displayed in the report with the “SelectYear” report parameter. The value “CY” shows the current year date and “PY” shows the previous year date (also check this blog about parameterizing your data source).

Continuous Integration

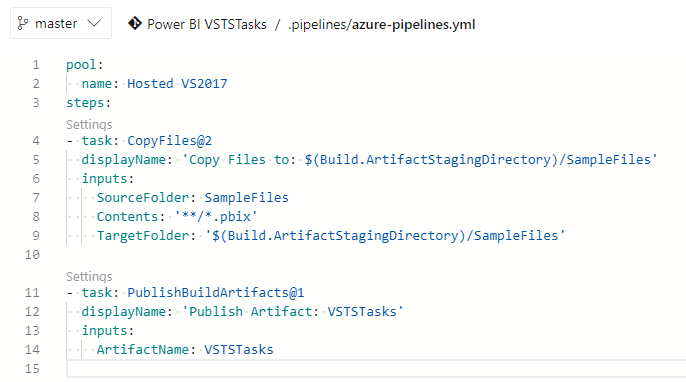

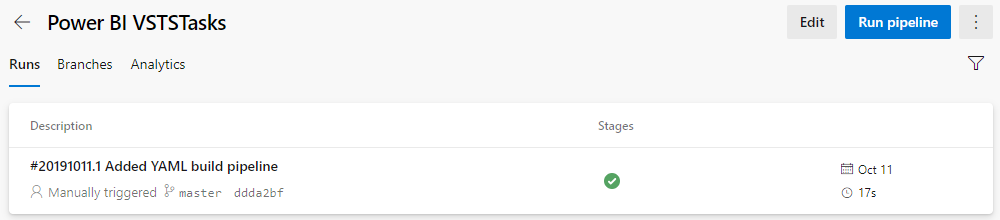

To (automatically) build and create an artifact (package) of your code (i.e. the pbix file), create a build pipeline in Azure DevOps.

When you change your code and push it to your version control repository, the default trigger kicks off the build pipeline to build your application.

Continuous Delivery

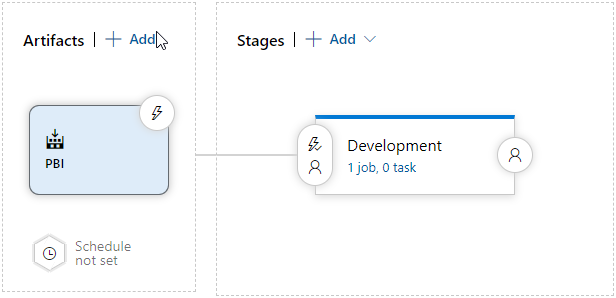

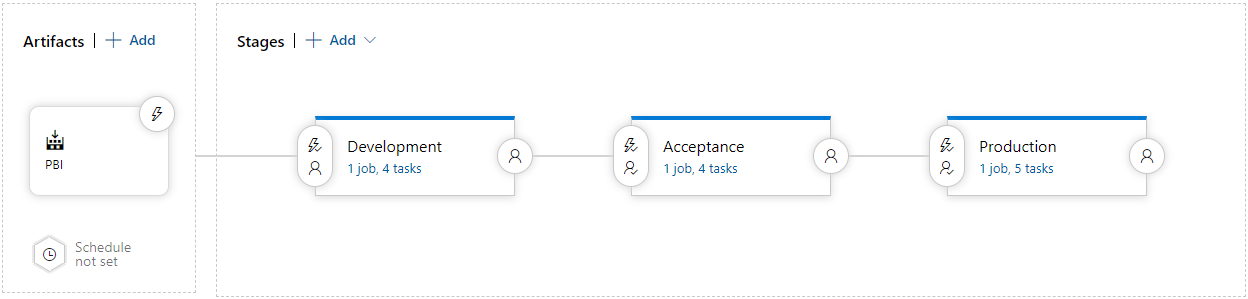

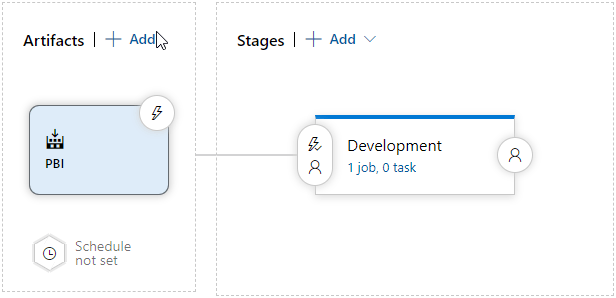

Now that the extension is installed and we have an artifact containing some Power BI files, we can begin building our release definition. Let’s start with a development stage and add the build artifact from the previous step to the release definition.

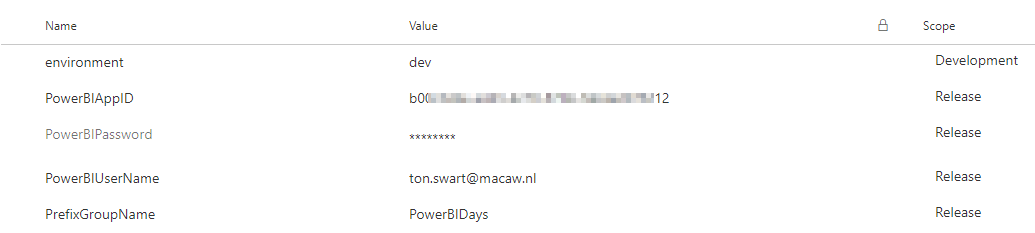

Create some pipeline variables

As the name suggests, the value of a variable may change from run to run or job to job of your pipeline. Almost any place where a pipeline requires a text string or a number, you can use a variable instead of hard-coding a value. The system will replace the variable with its current value during the pipeline’s execution. Variables can be scoped on a stage or a release. Use a stage-level variable for values that vary from stage to stage like “environment”.
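The substitution described above can be sketched as follows. This is an illustration in Python of how `$(name)` macros resolve, not how Azure DevOps implements it. The values DP_DEMO, dev and prd are assumed example values. The sketch is also case-sensitive, whereas Azure DevOps variable names are not.

```python
import re

# Matches macro-style references such as $(environment).
_MACRO = re.compile(r"\$\(([A-Za-z0-9_.]+)\)")


def expand(text: str, release_vars: dict, stage_vars: dict) -> str:
    """Replace $(name) macros; stage-scoped values win over release-scoped ones."""
    merged = {**release_vars, **stage_vars}

    def substitute(match: re.Match) -> str:
        # Unknown variables are left untouched so a typo stays visible.
        return merged.get(match.group(1), match.group(0))

    return _MACRO.sub(substitute, text)


if __name__ == "__main__":
    release_vars = {"PrefixGroupName": "DP_DEMO"}  # assumed example value
    for stage in ("dev", "prd"):  # hypothetical stage values
        stage_vars = {"environment": stage}
        print(expand("$(PrefixGroupName)_$(environment)", release_vars, stage_vars))
```

With one release-scoped prefix and a stage-scoped “environment” value, the same task configuration produces a different workspace name in every stage.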

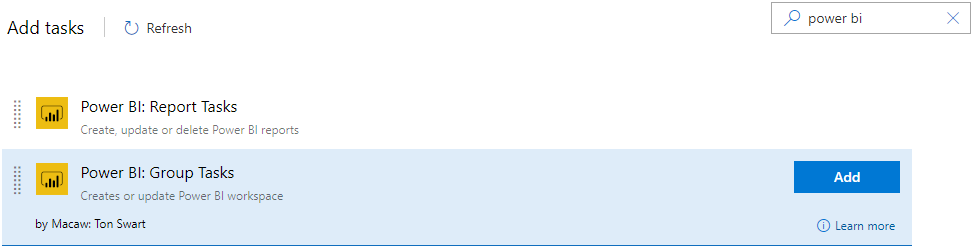

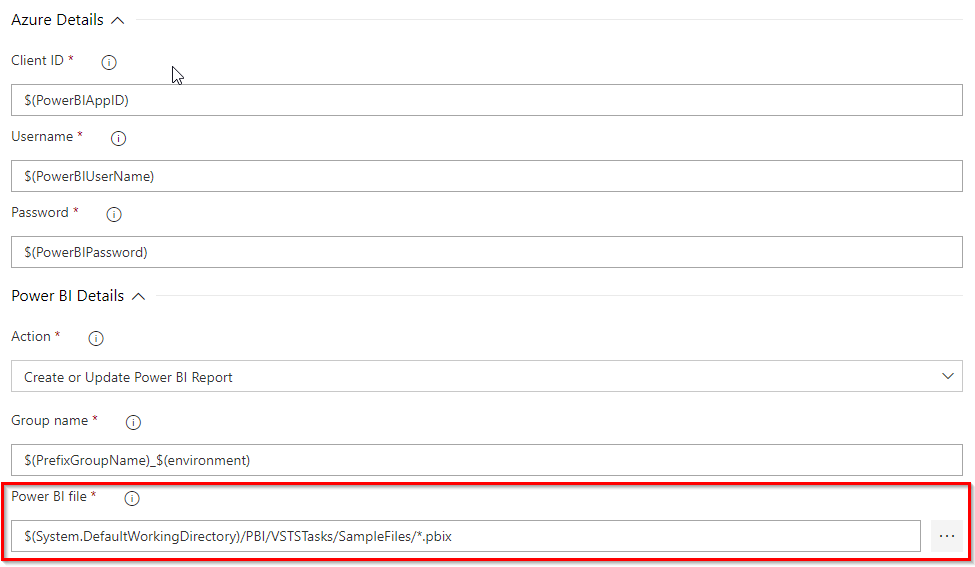

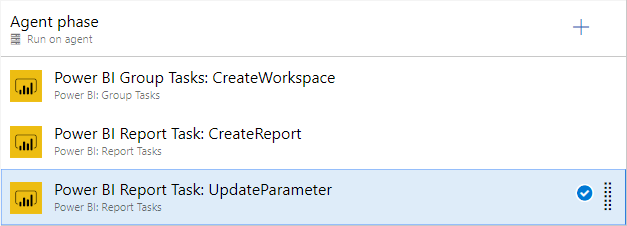

Creating a Power BI workspace

Start the release pipeline by adding a “Power BI: Group Tasks” task, which creates a Power BI workspace, to the release definition. Please note that the group task creates a new experience Power BI workspace by default. Although it is called a group task, it doesn’t create an O365 group.

Then configure this task using the variables created in the previous step: $(PowerBIAppID) for the “Client ID” property and $(PrefixGroupName)_$(environment) for the Power BI workspace name.

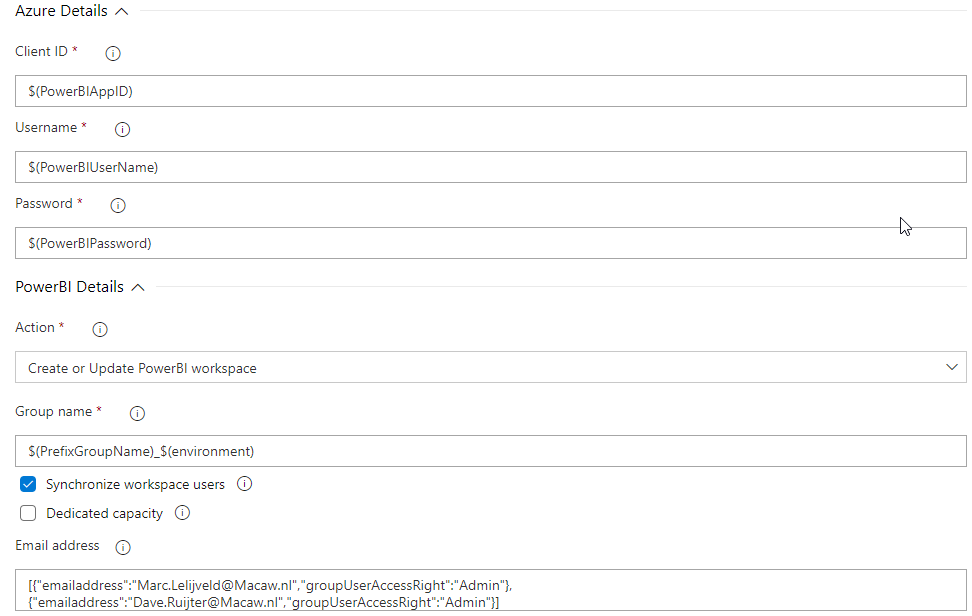

Publish Power BI report

Add another step to the release pipeline to publish Power BI files from the artifact by adding the “Power BI: Reports Tasks” custom task.

Then again, configure this step. You can select the Power BI file(s) from the artifact using the […] button.

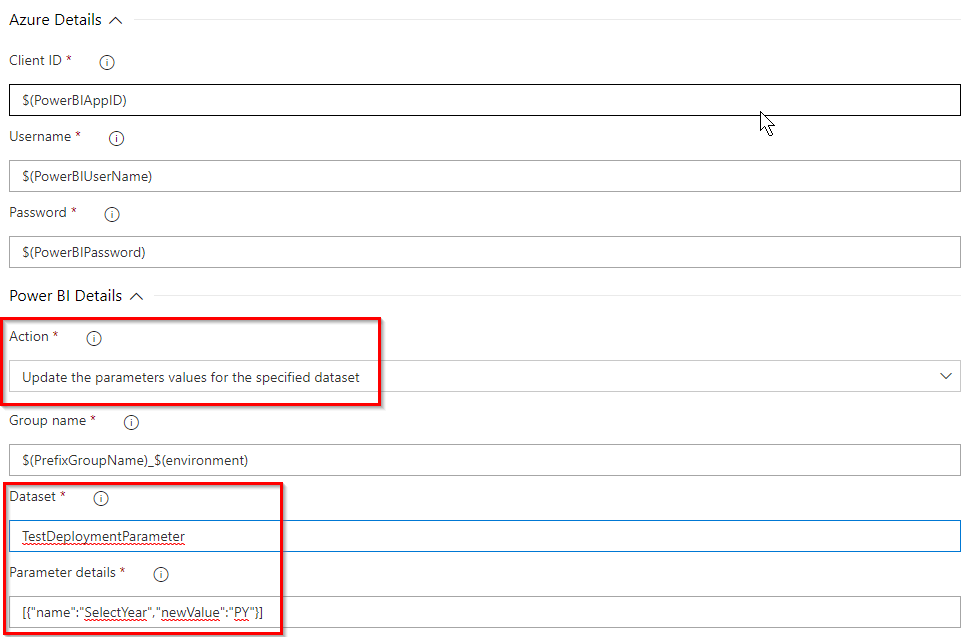

Changing a Power BI dataset parameter

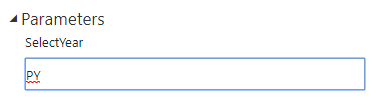

Now, let’s change the dataset parameter “SelectYear” in the Development stage from “CY” (Current Year) to “PY” (Previous Year).

Once again, add another “Power BI: Reports Tasks” step to your release pipeline. Configure this task using the action “Update the parameters values for the specified dataset” and enter the “Dataset” and “Parameter details” parameters.
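For reference, the parameter update configured in this task corresponds to the Power BI REST API call “Update Parameters In Group”. The sketch below, in Python and for illustration only, builds the URL and JSON body for that call without sending anything. Whether the extension task issues exactly this request is an assumption on our side, and the function name and ID placeholders are hypothetical.

```python
import json

API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def build_update_parameters_call(group_id: str, dataset_id: str, new_values: dict) -> tuple:
    """Return the (url, json_body) pair for the Update Parameters In Group call."""
    url = f"{API_ROOT}/groups/{group_id}/datasets/{dataset_id}/Default.UpdateParameters"
    # One updateDetails entry per parameter that should get a new value.
    body = {
        "updateDetails": [
            {"name": name, "newValue": value} for name, value in new_values.items()
        ]
    }
    return url, json.dumps(body)


if __name__ == "__main__":
    # The IDs are placeholders for the workspace and dataset GUIDs.
    url, body = build_update_parameters_call("<workspace-id>", "<dataset-id>", {"SelectYear": "PY"})
    print("POST", url)
    print(body)
```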

By repeating the above steps a few times, your pipeline could look like this:

And the definition in your Development stage could look similar to:

Create a release

When all configuration is done, creating a release will automatically create a workspace in PowerBI.com, upload the report(s) and change the dataset parameter “SelectYear”. Let’s try!

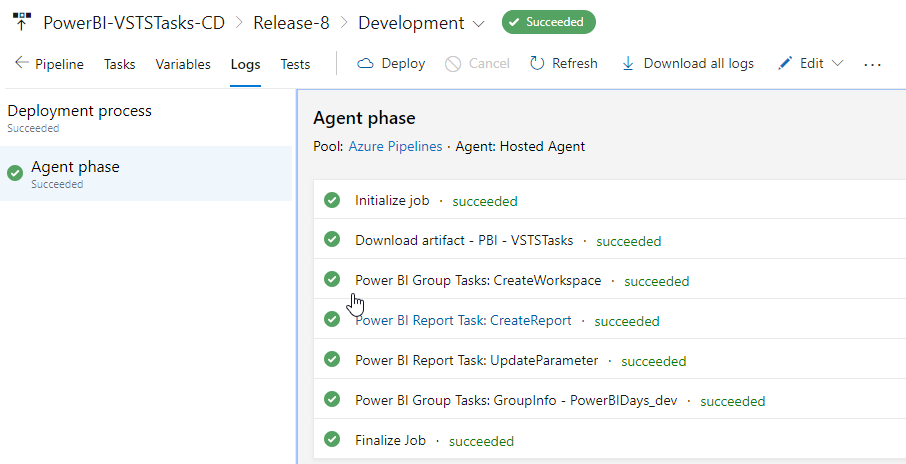

Check your deployment logs and verify that every step succeeded.

To see whether the pipeline has indeed changed the dataset parameter, log in to PowerBI.com and verify that the dataset parameter “SelectYear” has changed to “PY”.

And last but not least check the report. That worked!

Wrap up and remarks

For our way of working, this approach adds real value. By deploying our Power BI content from a DevOps perspective, we can easily move our artifacts (pbix files) from workspace to workspace. We can even roll back to an earlier version of the artifact in the unlikely situation that we messed up somewhere, all based on our versioning in Git.

Of course, the end-to-end process needs to be customized to your own way of working. With the extensions available in the Azure Marketplace, you can easily add these tasks to your own processes. The approach is most powerful once you combine it with the deployment of other components, such as your data platform or anything else that can be deployed with Azure DevOps.

Though, there are some really interesting things on the Power BI roadmap, which might (partly) overlap with this solution. The following two can be really interesting:

Both have been shown by Microsoft in demos or videos at conferences. In our opinion, neither of them covers everything end to end, including versioning or integration into existing deployment pipelines. At least, not yet… However, they look very promising, so definitely keep an eye out for them.

Resources

- Azure Marketplace to download extensions

- Basic versioning of files with SharePoint / OneDrive sync

- Register an Azure AD application to use with Power BI

- Difference in classic and new Power BI workspaces

Co-author

This blog is co-authored by my colleague, Ton Swart. Ton is a Data & Analytics DevOps Engineer at Macaw and has over 12.5 years of experience in IT. This includes roles as BI Consultant, Data warehouse specialist and IT consultant. His focus is on Business Intelligence. Ton’s areas of expertise include CI/CD with Azure DevOps, PowerShell and automating Power BI deployments.

I’ve been playing with this too, but ran into the issue of authentication. For some operations you have to have a Power BI account, however, MFA cannot be enabled on it. The best solution would be building automated release pipelines using service principals, however, these cannot publish reports for instance. How did you solve this?

Hi Bas,

Take a look at this extension. It has been around for a long time and supports more features, including the service principal authentication you are looking for.

https://marketplace.visualstudio.com/items?itemName=maikvandergaag.maikvandergaag-power-bi-actions

Also with your extension I get the “unauthorized” error. My service principal has all the API rights, and in the Power BI admin center I’ve enabled service principals for the entire org… Should work, no?

Hi Bas,

Unauthorized mostly has something to do with the permissions granted. Only selecting the permissions in the App Registration isn’t enough. Did your admin also grant the permissions to this app registration? It is called Grant Admin Consent. Check the documentation here: https://docs.microsoft.com/en-us/azure/active-directory/manage-apps/configure-user-consent

Best, Marc

I definitely had granted admin permissions. In the end, something went wrong with adding the SP to the workspace. Now that’s all good and the deployments work. Thanks for sharing your ideas and knowledge!

Hi Bas,

First of all, thank you for reading our blog.

And it is possible to use a service principal for deploying to Power BI using the Power BI REST API. You have to enable it in the Power BI Admin portal.

Source: https://docs.microsoft.com/en-us/power-bi/developer/embed-service-principal

Unfortunately, this option is not available in the “Macaw Power BI Extensions” tasks available in the Azure DevOps Marketplace and used in our blog.

It is on the backlog and is work in progress.

Regards,

Ton.

Also check out this extension: it has a lot more history and features. It also supports service principal authentication.

https://marketplace.visualstudio.com/items?itemName=maikvandergaag.maikvandergaag-power-bi-actions

Hi, any ideas on how to handle this for Power BI Report Server?

Thanks!

Hi Christian,

I don’t have that much experience with Power BI Report Server. Can you elaborate a bit more on what exactly you’re looking for?

– For deployment, have a look at the Power BI Report Server APIs: https://docs.microsoft.com/en-us/power-bi/report-server/rest-api

– For versioning, the same applies as for any other Power BI content. This can be done with a git repo or even SharePoint or OneDrive.

Hope this gives you some ideas on how you can customize the process to work for Report Server.

Cheers,

Marc

Wonderful article, but any thoughts on how we could handle this with Shared Datasets?

In that part PBI fails me a lot.

We have workspaces containing datasets and some dataflows, always in 3 versions: Dev/UAT/Prod. Why? Not only do some datasets get extra fields, but also not everybody in the company is allowed to see production data.

In parallel we have our report workspaces/apps, also Dev/UAT/Prod, but we can’t find a decent way to ‘re-point’ from DEV to UAT (as an example). It all has to be done manually, and this is not easy.

Such a pity PBI doesn’t allow us to just change the ‘source-workspace’ and keep all DS names.

Any suggestions on this WoW?

APIs to the rescue here! With the Power BI REST API, you can swap connections, rebind reports to other datasets and much more!

As you probably want to automate this, you can integrate these steps in a PowerShell script and let it run as part of your deployment pipeline in Azure DevOps if you like.

Cheers,

Marc

You write this post very carefully I think, which is easily understandable to me. Not only this, but another post about devops is also good. As a newbie, this info is really helpful for me. Thanks to you.

Hi,

I am trying to build the same solution, but I am getting an error in the release pipeline under the “Power BI report Task : Create report” task.

Error:

The property ‘error’ cannot be found on this object. Verify that the property exists.

I checked the connection and everything else but couldn’t find the cause of this.

Could you please help me figure it out?

Regards

Hi Himanshu,

You can try adding a pipeline variable system.debug and setting its value to true.

Kr. Ton.

Here is the catch, but I am not able to understand it.

2022-05-19T08:07:20.2327726Z Done processing Power BI report deployment tasks

2022-05-19T08:07:20.2389352Z ##[debug]Leaving D:\a\_tasks\reportTasksV2_e42cbc98-47bd-48a7-8da5-258698afc839\2.0.7\reportTasks.ps1.

2022-05-19T08:07:20.2514798Z ##[debug]Caught exception from task script.

2022-05-19T08:07:20.2568641Z ##[debug]Error record:

2022-05-19T08:07:20.3149979Z ##[debug]The property ‘error’ cannot be found on this object. Verify that the property exists.

2022-05-19T08:07:20.3168242Z ##[debug]At D:\a\_tasks\reportTasksV2_e42cbc98-47bd-48a7-8da5-258698afc839\2.0.7\ps_modules\power.bi.cicd.tools\private\Invoke-PBIToolAPI.ps1:39 char:21

2022-05-19T08:07:20.3195596Z ##[debug]+ … if ($errorContent.error.code -eq “AddingAlreadyExistsGroupUse …

2022-05-19T08:07:20.3224882Z ##[debug]+ ~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~~

2022-05-19T08:07:20.3226161Z ##[debug] + CategoryInfo : NotSpecified: (:) [], PropertyNotFoundException

2022-05-19T08:07:20.3240809Z ##[debug] + FullyQualifiedErrorId : PropertyNotFoundStrict

2022-05-19T08:07:20.3261789Z ##[debug]

2022-05-19T08:07:20.3293810Z ##[debug]Script stack trace:

2022-05-19T08:07:20.3344628Z ##[debug]at Invoke-PBIToolAPI, D:\a\_tasks\reportTasksV2_e42cbc98-47bd-48a7-8da5-258698afc839\2.0.7\ps_modules\power.bi.cicd.tools\private\Invoke-PBIToolAPI.ps1: line 39

2022-05-19T08:07:20.3364973Z ##[debug]at GetWorkspace, D:\a\_tasks\reportTasksV2_e42cbc98-47bd-48a7-8da5-258698afc839\2.0.7\ps_modules\power.bi.cicd.tools\private\GetWorkspace.ps1: line 13

2022-05-19T08:07:20.3408896Z ##[debug]at Start-PBIToolUploadReport, D:\a\_tasks\reportTasksV2_e42cbc98-47bd-48a7-8da5-258698afc839\2.0.7\ps_modules\power.bi.cicd.tools\public\Start-PBIToolUploadReport.ps1: line 22

2022-05-19T08:07:20.3429313Z ##[debug]at , D:\a\_tasks\reportTasksV2_e42cbc98-47bd-48a7-8da5-258698afc839\2.0.7\reportTasks.ps1: line 74

2022-05-19T08:07:20.3451224Z ##[debug]at , : line 1

2022-05-19T08:07:20.3470746Z ##[debug]at , : line 22

2022-05-19T08:07:20.3489716Z ##[debug]at , : line 18

2022-05-19T08:07:20.3553365Z ##[debug]at , : line 1

2022-05-19T08:07:20.3582210Z ##[debug]Exception:

2022-05-19T08:07:20.3648144Z ##[debug]System.Management.Automation.PropertyNotFoundException: The property ‘error’ cannot be found on this object. Verify that the property exists.

2022-05-19T08:07:20.3665523Z ##[debug] at System.Management.Automation.ExceptionHandlingOps.CheckActionPreference(FunctionContext funcContext, Exception exception)

2022-05-19T08:07:20.3814493Z ##[debug] at System.Management.Automation.Interpreter.ActionCallInstruction`2.Run(InterpretedFrame frame)

2022-05-19T08:07:20.3817099Z ##[debug] at System.Management.Automation.Interpreter.EnterTryCatchFinallyInstruction.Run(InterpretedFrame frame)

2022-05-19T08:07:20.3819878Z ##[debug] at System.Management.Automation.Interpreter.EnterTryCatchFinallyInstruction.Run(InterpretedFrame frame)

2022-05-19T08:07:20.4086142Z ##[error]The property ‘error’ cannot be found on this object. Verify that the property exists.

2022-05-19T08:07:20.4100556Z ##[debug]Processed: ##vso[task.logissue type=error]The property ‘error’ cannot be found on this object. Verify that the property exists.

2022-05-19T08:07:20.4111736Z ##[debug]Processed: ##vso[task.complete result=Failed]

2022-05-19T08:07:20.4514945Z ##[section]Finishing: Power BI Report Task: CreateReport
