Last week, I was challenged by moving my Power BI dataset through the next stage in my DTAP pipeline. I simply wanted to re-assign my connections to the data source in the next stage. In my case, I created a separate workspace including separate dataflows for each stage. Since de dataflows connector in Power BI is calling some Ids hard coded in the Power Query code, I was forced to manually change these Ids. In my opinion there should be an easier way to do this…

The PowerBI.Dataflows(null) connector?

The dataflows connector in Power BI desktop is a default connector. Once we connect, you will get an overview of all your workspaces which does include dataflows and all underlying entities.

The dataflows connector in Power BI desktop is a default connector. Once we connect, you will get an overview of all your workspaces which does include dataflows and all underlying entities.

Let’s assume that I do have three Power BI workspaces and each one contains a dataflow. All entities do have the same name and same underlying table name. Since we already recognize the environment by the workspace name, I always use the same entity and tables names across all DTAP stages.

As an example, I just selected the table PimpYourDataflowConnector in the development workspace and loaded this one. When we take a look at the generated code by Power Query in the advanced editor, it will look something like this:

let Source = PowerBI.Dataflows(null), #"12a34567-1ab2-1234-a123-1a2b34cde567" = Source{[workspaceId="12a34567-1ab2-1234-a123-1a2b34cde567"]}[Data], #"9z8yx765-zyx9-98z7-9zy8-zyxw987vu654" = #"12a34567-1ab2-1234-a123-1a2b34cde567"{[dataflowId="9z8yx765-zyx9-98z7-9zy8-zyxw987vu654"]}[Data], PimpYourDataflowsConnector = #"9z8yx765-zyx9-98z7-9zy8-zyxw987vu654"{[entity="PimpYourDataflowsConnector"]}[Data] in PimpYourDataflowsConnector

Looking at this generated code, it is containing my workspaceId and dataflowId hard coded. Actually, the first line in this code where the function PowerBI.Dataflows(null) is called, is probably the most interesting one. This step returns all dataflows where the logged-in user has access to on tenant level.

Make it flexible!

Power Query is an expression-based language. Every step has its own result, which is called as an input for the step afterwards. Looking at the code, we also see some filters applied, for example in the source step, where we filter the column workspaceId on the value called afterwards. Besides that, we see [Data] called after the filter. With this, Power Query will only return the result in the column called Data.

Now, we know this, we can build our own logic which makes it easier to switch between the dataflows in different workspaces. We will do this by following the below steps:

- As first step we call the connector PowerBI.Dataflows(null). As already mentioned, this will result in a list of all our dataflows across the tenant where the logged-in user has access to.

- Second, we create a parameter for our workspace name which we will use for filtering in the next step. The parameter should be type text to make the filter work. Of course, it can be handy to add all possible results as a list in the parameter. But know that you won’t be able to see this list when you want to change the parameter in the Power BI Service.

Read more about changing parameters in the Power BI service in my previous blog post about Parameterize your datasource. - Next is to apply the created parameter as a filter to our table which includes all dataflows. We can do this by simply adding a filter with the dropdown on the column workspaceName and apply the following:

- Now, we only have one workspace left. We want to have the tables included in the data column. We can get this data by drilling down on the Data column. But if we do so, Power Query will still apply a filter based on the workspaceId. To avoid that, we will right-click on the Data column and do remove other columns.

- Only the Data column is left so far. As a next step, we will expand the Data column to get all underlying content and expand all columns.

As a result, we will see all available dataflows for the specified workspace. In this example that is only one dataflow, but this could result in multiple dataflows, so multiple rows.

As a result, we will see all available dataflows for the specified workspace. In this example that is only one dataflow, but this could result in multiple dataflows, so multiple rows. - We can do a simple drill down now, by clicking on Table in the column which is called Data.1. If we do, Power Query will create a step with the following code:

#”Expanded Data”{0}[Data.1]

The part where you see {0} means that it returns the result for the first row. (Power Query starts counting at zero). For now, this works. But maybe we add new dataflows in the future which might impact the order of rows. As a result of that, the first row might be different, and return a different dataset.To avoid issues like this, please first add a filter where you only select the required dataflow. You can do this again by using a parameter. I just hardcoded filtered the dataflow name here. Simply because my dataflow does have the same name in each stage (Dev / Acc / Prd). - After filtering, we only have one dataflow left. Now, we can easily click the Table element in the Data.1 column to be expanded.

This will result in a list of all underlying entities. - For entities, the same story applies as explained for dataflows in step 6. It is better to first apply a filter based on the entity you want to extract, before clicking the Table object in the Data column.In my example, I applied a filter on the entity column to equal the entity name “PimpYourDataflowsConnector”. This step will result in only one row left. This one row includes the entity you want to extract.

- The last step is to expand the Table object in the Data column. By clicking the Table object, Power Query will again apply a hard-coded filter in the code which we don’t want to. In this case that doesn’t matter so much, because this filter will be the same as we applied in step 8.If we want to optimize this, we can do that with first apply an additional step to remove all columns except the Data column. If you expand the table object afterwards, this will result in the table included in the entity.

Wrap things up

In the previous nine steps, we have optimized our dataflows connector to easily switch over workspaces. This will come in handy when you want to switch your data source by moving to the next stage in your development process.

Looking at the code we generated, you will still have a few things hard coded. That are the dataflowName and entityName. Besides these objects, there are hard-coded column names for expanding in the code as well. In the Expanded data step, that doesn’t make a big difference, since this is all meta data of the dataflows. But our last step, Expanded Data1 contains all column names included in our entity. In case we add another column to our entity in the future, it won’t show up. To make this more flexible, please read my blog about Dynamically expanding and appending tables with Power Query.

To get a final overview, below is all Power Query code which we generated.

let Source = PowerBI.Dataflows(null), #"Filtered Rows" = Table.SelectRows(Source, each [workspaceName] = WorkspaceFilter), #"Removed Other Columns" = Table.SelectColumns(#"Filtered Rows",{"Data"}), #"Expanded Data" = Table.ExpandTableColumn(#"Removed Other Columns", "Data", {"dataflowId", "dataflowName", "dataflowDescription", "Data", "ItemKind", "ItemName", "IsLeaf"}, {"dataflowId", "dataflowName", "dataflowDescription", "Data.1", "ItemKind", "ItemName", "IsLeaf"}), #"Filtered Dataflow" = Table.SelectRows(#"Expanded Data", each [dataflowName] = "DemoEntity"), #"Expand Data.1 Column" = #"Filtered Dataflow"{0}[Data.1], #"Filtered Entity" = Table.SelectRows(#"Expand Data.1 Column", each [entity] = "PimpYourDataflowsConnector"), #"Removed Other Columns1" = Table.SelectColumns(#"Filtered Entity",{"Data"}), #"Expanded Data1" = Table.ExpandTableColumn(#"Removed Other Columns1", "Data", {"Environment"}, {"Environment"}) in #"Expanded Data1"

Template

To make it even easier for you, I generated a template file. Please feel free to download this template file and reuse it. Since I don’t know which columnsnames your dataflow consists of, I’ve added some more logic to the Power Query to dynamically expand the table. As already mentioned in the section before, you can read how to do this in my previous blog post.

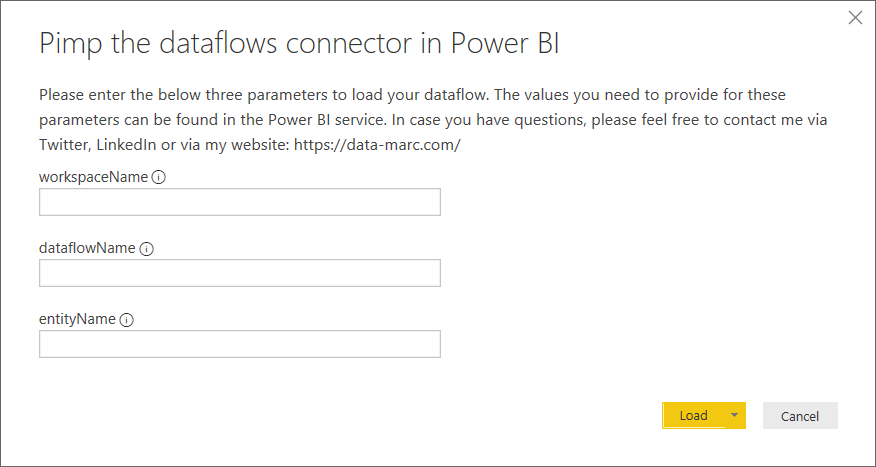

By opening the template file, you will be prompted to enter three parameters. These three parameters will be your workspaceName, dataflowName and entityName. After providing this information, it will load your table.

You can download the template file here.

Awesome post!

For some reason, the data types are lost, and need to be redefined again.

Any suggestions on how to import the data with the same datatypes as defined in the dataflow?

LikeLike

Not sure, but could be that it has something to do with the regional settings and how your data will be interpreted. Did you check those settings?

LikeLike

Did you ever figure this out? I am having the same issue.

LikeLike

To keep data types from original source I used the query below (I don’t take credit, found online) and altered to suit my needs replacing tblToAlter and tblGet with correct steps/records.

#”Retain Types” =

let

tblToAlter = #”Expanded Data1″,

tblGet = #”Removed Columns”[Data]{0},

typetblGet = Value.Type(tblGet)

,NameCols = List.Intersect({Table.ColumnNames(tblGet)

,Table.ColumnNames(tblToAlter)})

,NameFuncTypes = List.Transform(NameCols, (x) => { x

,each {_}{0}

,Type.TableColumn(typetblGet, x) })

,Transform = Table.TransformColumns(tblToAlter, NameFuncTypes)

in

Transform,

If you weren’t altering steps name it should be working in this form, otherwise, alter to your needs 🙂

LikeLike

Awesome post!

For some reason, the data types are lost, and need to be redefined again.

Any suggestions on how to import the data with the same datatypes as defined in the dataflow?

LikeLike

Hi Marc, my company blocks bit.ly links. Could you post a full url to the template?

Thanks!

LikeLike

Sure, I just uploaded it to my github repository as well.

https://github.com/marclelijveld/PowerBIExamples

LikeLike

Pingback: Bring existing tables to Power BI dataflows with Tabular Editor – Data – Marc

be aware that with this approach, your linked entities wont work anymore. They are shown with the icon, but auto-refresh of computed entities on top of linkedentities will not be triggered.

LikeLike

Hi Marc – This is extremely useful to move DF’s across workspaces. I just wanted to check how did you update/edit credentials of the dataset once it has been published to workspace when its using dataflow as the source.

I’m using Appid via powershell to publish the dataset (connected to DF’s). while developing i use my own credentials to authenticate against dataflow but once its published since there is no gateway connection needed, how do you then update/authenticate/signin in power bi service -> dataset -> datasource -> edit credentials automatically via powershell or api? Appid has admin access on the workspace so it has the access but just need to authenticate somehow.

when done manually – Configure xxx dataset screen comes up with

ExtensionDatasource – PowerBI

ExtensionDatasourcePath – PowerBI

Authentication method – OAuth2

Privacy Level setting for this datasource – Private

after clicking sign in button it works fine but is there a way to automate this?

LikeLike

Nice Post !

Perfect base to continue searching …

LikeLike

Hi Marc,

Would something similar be possible with regular Shared DataSets ?

We have Workspaces containing datasets prepared by Data-Architects always a Dev/UAT/PRD version of the workspace. Not just for development and release management but also becasue of data-protection, not everybody is allowed to see Production Data.

I hoped based on the idea of DataFlows to create a comparable WoW but a Report with a linked DataSet doesn’t allow you to alter any setting, nor is it available in TransForm-Data ..

Kr, Harry

LikeLike

Hi Harry,

Short answer is no. But let me explain, Dataflow connects via Power Query, in the query editor. Therefore you can add parameters and change the variables. A dataset connects using, what we call, a live connection.

To solve your challenge, have a look at the Rebind Reporr API. That might help you.

Cheers,

Marc

LikeLike

Be aware that with this approach you are not going to be able to follow Dataflows connections to Dataset in Lineage view. They will appear as disconnected.

LikeLike

Hi Marc and Piotr,

I’ve been working with a client for a while and lineage is very important. I struggled for a while to work out why the lineage wasn’t there for Dataset to Dataflow for some critical datasets. I realised that the consultants who created the dataset utilised Marc’s method for easy switching between Dev, Test and Production.

I found a simple way of getting the lineage to work whilst still retaining most of the simplicity offered by Marc’s excellent method. If you think this is useful I’m happy to share and perhaps if you think it is useful, it can be added to this post?

LikeLike

Please feel free to add 🙂 curious to see what you came up with!

LikeLiked by 1 person

Tried this method and found it works but with the following caveats already described:

– You loose the lineage from dataflow to the dataset.

– Because of the filtering, Power BI Service requires credentials via OSAuth. This does not occurs using the “standard” method accessing PBI Data Flows.

To address this, rather than use the workspace names and Data Flow names, I used the GUIDs as parameters instead. This is not exactly elegant as you need to know the GUIDs for each of the workspaces and dataflows across your environments. However, once you have them, when you deploy your dataset via PBI Pipelines, you can set deployment rules to set the GUIDs for the environment you are moving into. To get the GUIDs just go to the workspace and look at the URL in your browsers address line. The GUID is part of the address. To get the guild for the DataFLow, click the Dataflow within the workspace. Again the Dataflow GUID will appear in the address line.

Below is a same script attempting to retrieve the DataFlow for a Fiscal Calendar:

let

Source = PowerBI.Dataflows(null),

#”WS” = Source{[workspaceId=WorkspaceLocationID_GUID]}[Data],

#”DF” = #”WS”{[dataflowId=DataflowID_FiscalCalendar_GUID]}[Data],

#”Fiscal Calendar” = #”DF”{[entity=”Fiscal Calendar”]}[Data]

in

#”Fiscal Calendar”

WorkspaceLocationID_GUID and DataflowID_FiscalCalendar_GUID are Text parameters defined for the dataset.

LikeLike

Thanks for the extensive reply and addition 🙃

LikeLike

Hello, do you know if there is a way to parameterize the frase “Source = PowerPlatform.Dataflows(null),” in Power Query for Power BI Desktop?

LikeLike

What is your reasoning behind parameterizing that specific section? Cause the line below that includes the Guids where the source is located.

–Marc

LikeLike

I have a lot of DF-references in my power queries, and I am now in the process of changing all of them from PowerBI.Dataflows(null) to PowerPlatform.Dataflows(null). I would prefer centralising this with a parameter in case of any other future changes in the connector from PBI to DF.

LikeLike

I am in the same situation as Michiel van Straten, PowerBI.Dataflows(null) is now a legacy connector being replaced with PowerPlatform.Dataflows(null) but seems hard to parameterize using your method as the workspaces and dataflows containing the new connector are no longer navigatable.

LikeLike

I’ve worked out that the new PowerPlatform.Dataflows connector for datasets is clever enough to hook itself up with upstream dataflows. Make sure to add the dataflow gateway source account to the workspace with viewer permissions to avoid the elusive mashup error.

LikeLike

Hi Marc,

My solution to the lineage problem is a little similar to JoeKoeJr’s. I tried his solution and it didn’t seem to get the lineage working again for me (could be my mistake).

I’ll describe the problem statement for anyone unfamiliar with this issue:

– Where organisations have a Dev, Test and Production version of a dataflow (all connecting to different dataflows, Marc’s solution offers a fantastic way of easily switching between those source dataflows. Just a simple switch between a “Dev, Test or Prod” value in a parameter in the deployment pipeline.

– Unfortunately though, this method does stop the lineage view from working properly – meaning that it is not possible in Purview or Power BI to see how the data flows from a source like SQL Server to a dataflow and then finally into the dataset/reports. Lineage is a critical requirement at my client organization.

– However, I didn’t want to change the methodology used to connect to the dataflows because there were 80+ queries in this particular dataset all pointed to the staging query which lists the dataflow entities. I didn’t want to have to change every query, so I wanted to make the staging query produce the same end result, with each subsequent query then filtering based on the entity that it pulls from.

Solution:

– I added new parameters which contain WorkspaceID and DataflowID.

– I changed the Staging Query to refer to these IDs and also changed the methodology of the query to use the standard dataflows connector. Here’s the M code:

let

Source = PowerPlatform.Dataflows(null),

Workspaces = Source{[Id=”Workspaces”]}[Data],

#”Filtered Rows” = Table.SelectRows(Workspaces, each ([workspaceId] = WorkspaceID)),

#”Removed Other Columns” = Table.SelectColumns(#”Filtered Rows”,{“Data”}),

#”Expanded Data” = Table.ExpandTableColumn(#”Removed Other Columns”, “Data”, {“dataflowId”, “dataflowName”, “dataflowDescription”, “Data”, “ItemKind”, “ItemName”, “IsLeaf”}, {“dataflowId”, “dataflowName”, “dataflowDescription”, “Data.1”, “ItemKind”, “ItemName”, “IsLeaf”}),

#”Filtered Dataflow” = Table.SelectRows(#”Expanded Data”, each [dataflowId] = DataflowID),

#”Expand Data.1 Column” = #”Filtered Dataflow”{0}[Data.1]

in

#”Expand Data.1 Column”

Unfortunately this approach still didn’t uncover the lineage (surprisingly). I could have changed back to the old methodology from Marc, but I kept the change above because I wanted everything to react to a change in the Workspace/Dataflow ID in the deployment pipeline (see below).

– To get the lineage, I then added a small/simple “workaround”. I added a new query called a “lineage query” – which connects to a very simple entity from the underlying dataflow and then filters to a single row and single column (to avoid dataset bloating). I put a detailed explanation of this in the “properties” in PQ. I also hid this in the report view. You would have to do this for each dataflow that the dataset connects to. (Note that this approach makes sense mostly where you have a lot of queries connecting to many different entities of a single dataflow – which was what I had).

– I then maintained the correct Workspace and Dataset IDs in each stage of the deployment pipeline.

I felt that this approach worked well because you get the lineage back with only a small increase in difficulty when moving from Dev to Test to Production. Our developers have access to a catalogue of dataflows from the API which gives them easy access to Workspace IDs and Dataflow IDs so this isn’t too hard for them.

Hope this helps someone. Happy to be told it is rubbish and given a better idea :).

Rob

LikeLike

Thanks for such a detailed explanation Rob! Appreciate your time and effort 🙂

LikeLiked by 1 person

Thanks Marc. Hope it helps someone!

LikeLike

Do you know if you can specify the tenant that the PowerPlatform.Dataflows() connector uses. For example, I see in the Data column of the Environment row of the PowerPlatform.Dataflows() grid, there is a reference to the groupId, which is the tenant id. We have guest users on our tenant that have their own tenant as well, so those guest users can’t get to our dataflows. I was hoping to pass to the .Dataflows() connector the tenant id in some way, any thoughts?

Something like:

= PowerPlatform.Dataflows(“ctid=<some url or guid>”)

Brent.

LikeLike

Hi Brent,

The connector does not support this parameter or anything similar. However, they can re-authenticate in the dataflow connector. By default this will point them to their home tenant, but I suggest using the trick explained to publish from Power BI desktop to guest accounts, I assume this also applies to connecting to dataflows. I didn’t test it though… see this blog to follow the steps: https://data-marc.com/2022/06/20/power-bi-b2b-external-collaboration/

—Marc

LikeLike