Basically for every data model, but in particular for large and enterprise-grade data models, it might be wishful to perform backups. Power BI Premium offers a way to perform backup and restore operations for Power BI datasets. This feature, coming from Azure Analysis Services, helps you to take a backup of your dataset logic as well as the data itself and the ability to restore in case your dataset gets corrupted or backend processes have failed. Furthermore, this backup and restore process is also a way to migrate your datasets from Analysis Services to Power BI Premium.

In this blog, I describe what is needed for Power BI backup operations, how you can perform them and how to script / automate them.

Backup & restore

Power BI Backup and Restore is a capability coming from Analysis Services. In Analysis Services, you could run a backup of your data model which results in an *.abf file. This file, containing both data model logic and the data itself, could easily be created using SQL Server Management Studio. In a similar easy way as running a backup, restore operations can be performed.

As I do not want to repeat the Microsoft Documentation, I recommend to first read the documentation about Backup & Restore datasets with Power BI Premium in case you are not familiar with the concept.

Why should you perform backup operations?

Although Power BI is a SaaS offering, there is a guarantee on uptime of the service, but there is no responsibility at Microsoft for having a complete representative dataset in the service. Obviously this responsibility relies at the dataset owner.

Taking backups of your dataset is one of the things you can do as a dataset owner to work on your solution stability. There might be unfortunate scenarios where your dataset gets corrupted, or showing incomplete data due to failures in backend processes. Imagine your data warehouse is having issues, due to which there is an incomplete set of data in your Power BI dataset after refresh. As Power BI dataset owner you strive to serve your users correct and complete information in your dataset.

In case situations as described above occur, you can take benefit from restore operations in case you regularly take a backup of your dataset. By rolling back to the previous version of your dataset, you can make sure your users work with the most up-to-date complete set of data.

Of course, you do want to benefit from automated processes rather than maintaining a local pbix file which you download from the service every day, or refresh locally. With Power BI Backup and Restore you can automate backup processes. Let’s have a look at what you need and how you can perform these operations.

Where should I get started?

Let’s first have a look at some prerequisites before you can get started. It all begins with the your Power BI Service Administrator who must enable the workspace-level storage permissions in the tenant settings. Then, good to know is that the backup process of Power BI datasets is available for Power BI Premium only. Any type of Premium licensing (Premium per User and Capacity) allows you to take advantage of this feature. Besides the need for Premium, your Power BI workspace must be bind to a Azure Data Lake gen2 (ADLS gen2). This is a native integration of the Power BI Service which not only allows you to run backup operations, but also to store your dataflows on your own Data Lake storage. Perhaps unnecessarily, but of course you must have sufficient permissions to get this configuration working. Below a short overview of what you need to have;

On the Power BI side

- On the Power BI Workspace, you need to be a workspace administrator to configure the workspace connection to ADLS gen2.

- To perform backups, you must be admin, member, or contributor to the workspace.

- Restore backups to existing datasets, you must be owner of the dataset.

- Restore backups as new datasets, you must be admin, member or contributor to the workspace.

ADLS gen2 permissions

- The workspace administrator must be Azure Blob Data Contributor and Storage Blob Data Owner to connect the ADLS gen2 storage to the Power BI Workspace

Other prerequisites to connect ALDS gen2 to Power BI can be found in this documentation about dataflow storage to use Azure Data Lake Gen 2, which is the same ADLS storage as used for backup and restore in Power BI.

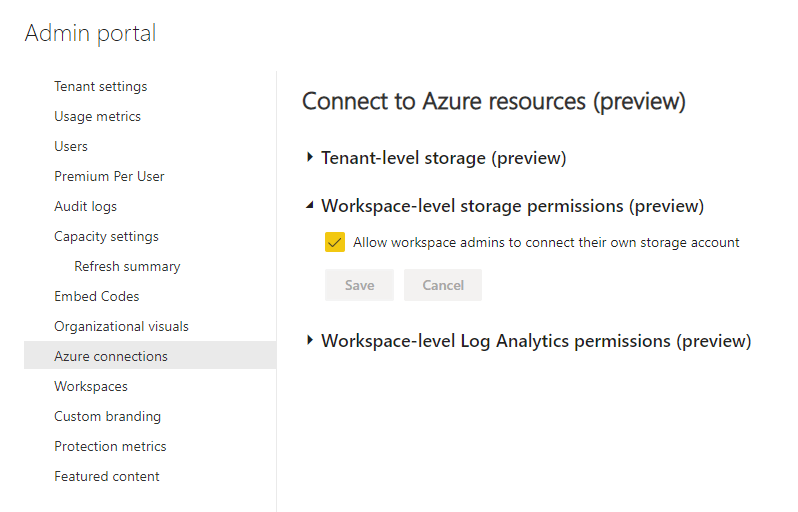

For the Power BI tenant administrator

In case you are a Power BI tenant administrator, then you have to enable workspace-level storage permissions first in the tenant settings. You can find this in the Azure connections tab. But before you do, kindly take a few things in consideration.

Please be aware of the difference in Tenant-level storage and Workspace-level storage! In case of the first, all the dataflows and all backups in your organization end up at the same storage account, which can be undesirable. For example, your finance data, HR data and tons of other sources will be saved in the same place. My advice would be to only use the Workspace-level storage permissions and not use the Tenant-level storage.

Furthermore, also have a look at the prerequisites which describe that;

- ADLS Gen 2 storage account cannot be behind a firewall.

- ADLS Gen 2 storage account cannot be located in a VNET.

- Attaching a dataflow with ADLS Gen 2 behind multifactor authentication (MFA) is not supported.

- As tenant administrator, you cannot limit this feature to a subset of users in your organization listed in a security group.

Automate the backup process

In this blog, I will not explain how you can manually perform backups, nor how you can restore a backup. Simply because that is fairly easy and well documented. For all manual operations you can use SQL Server Management Studio (v18.9 or up). But also other tools that allow you to perform actions over XMLA endpoints could work. Gilbert Quevauvilliers (Fourmoo) wrote an excellent post with a step-by-step guide on how to configure and perform backups.

If you have read earlier blogs from me, you might know that I like to automate some stuff with PowerShell, and so I will to automate the backup process of Power BI datasets. To get this script running, we need to have the PowerShell cmdlets for SQL Server installed. As part of the script, it will check whether the cmdlets module is available on your machine.

In the script, you first have to specify two variables, begin the Workspace name and the dataset name. The workspace name will be used to concatenate with the default part to form the XMLA endpoint which the script will use to connect to Power BI.

# Run parameters, please specify below parameters

$WorkspaceName = "DEMO%20-%20Backup%20data%20model"

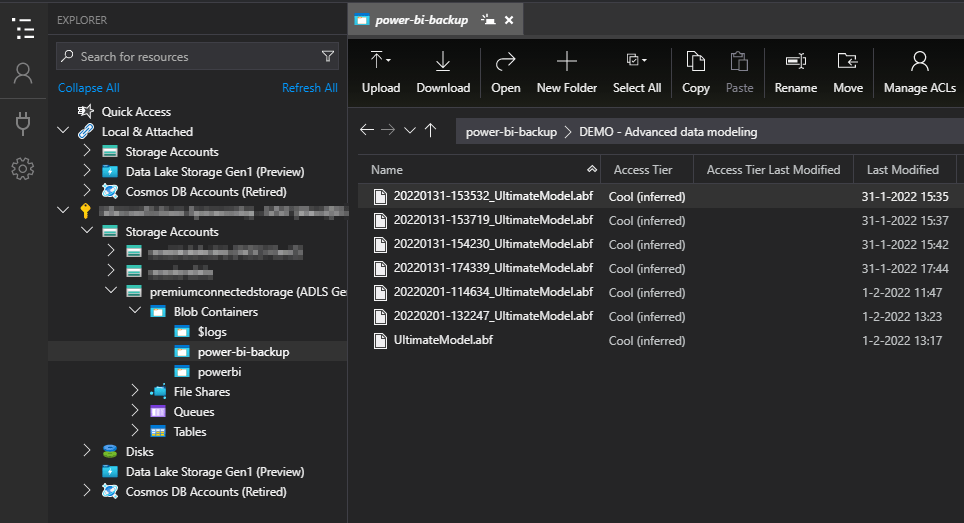

$DatasetName = "Example Model" Furthermore, as you might perform multiple backups, the name of the backup will be automatically generated based on the date and time of script execution, concatenated with the dataset name. This results in the name how the *.abf file will appear in the ADLS storage.

In the body send over the XMLA endpoint a few variables are defined to form the backup command. All variables and explanation can be found in this documentation.

{

"backup": {

"database": "$DatasetName",

"file": "$BackupFileName.abf",

"allowOverwrite": false,

"applyCompression": true

}

}At script execution time, a prompt will ask you to authenticate. Here you can use your account to authenticate to Power BI to perform the backup operation.

The full script can be downloaded on GitHub here.

After backup, the files will appear in the Azure Data Lake Storage. You can easily explore this using Azure Storage Explorer or the explorer in the Azure Portal. Below the results from an example I have setup.

Next steps

The script provided is still something to manually execute as there is an authentication prompt. However, this helps you to get started automating the backup process end to end. You can consider to automate the backup process in Azure Automation.

The better and preferred option should be to integrate the backup process in your generic orchestration pipelines. For example if you trigger Power BI refreshes from Azure Data Factory, first perform a backup then trigger a refresh operation. For inspiration, have a look at this blog post which describes how you can setup a similar process.

Last but not least, in case you want to perform backups for multiple datasets at the same time, a small modification to the script is required. Amish Shah wrote a blog on how you can run multiple backup operations in batch. Its a bit older blog focused on Analysis Services, but still works today.

Hope this helps you to get started automating your Power BI dataset backups.

Pingback: Scripting and Automating Power BI Backups – Curated SQL